OAK RIDGE, Tenn., May 23, 2018—Scientists at the Department of Energy’s Oak Ridge National Laboratory are the first to successfully simulate an atomic nucleus using a quantum computer. The results, published in Physical Review Letters, demonstrate the ability of quantum systems to compute nuclear physics problems and serve as a benchmark for future calculations.

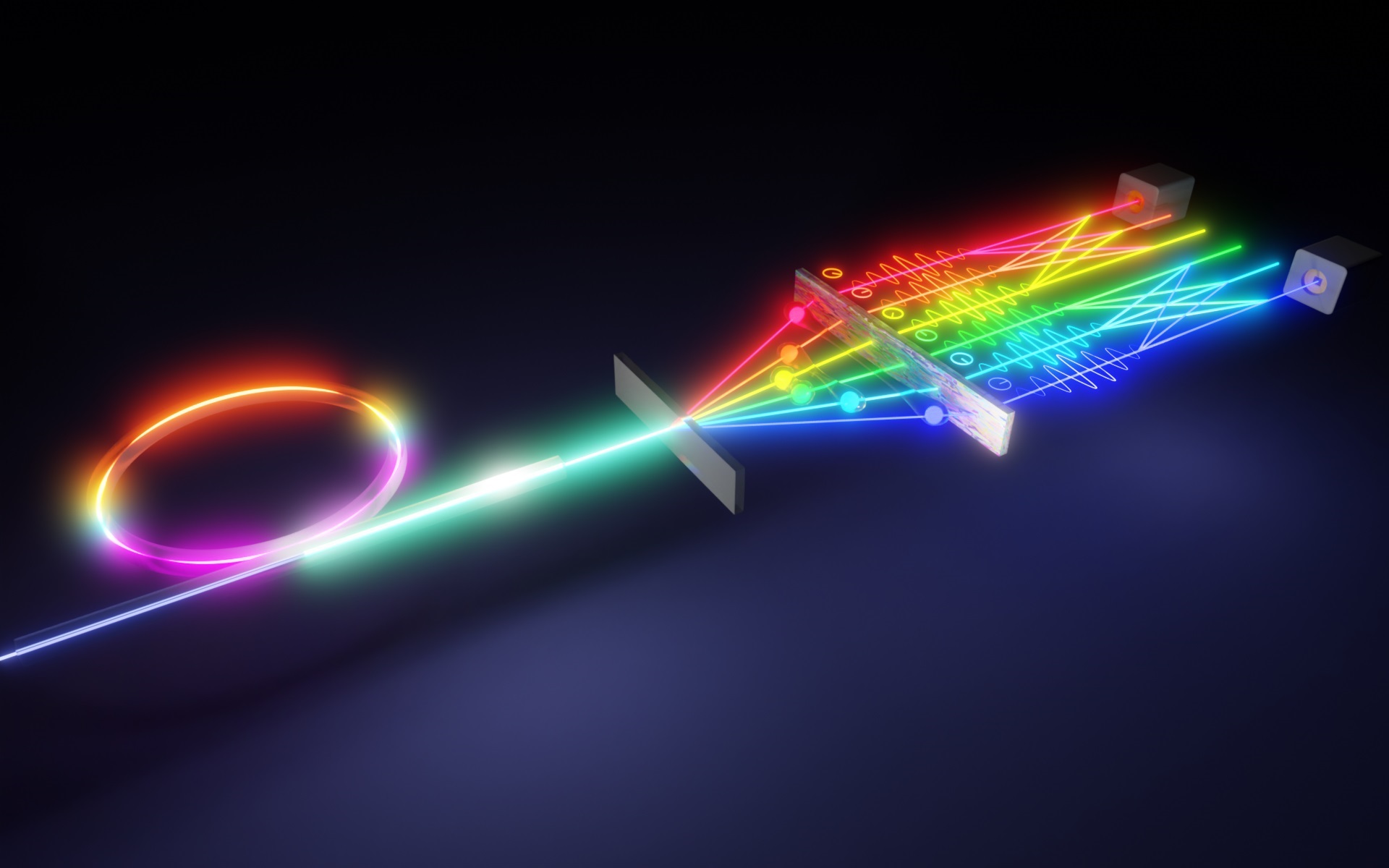

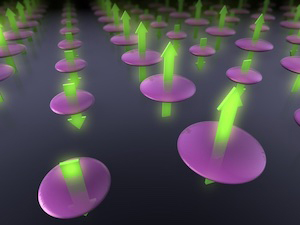

Quantum computing, in which computations are carried out based on the quantum principles of matter, was proposed by American theoretical physicist Richard Feynman in the early 1980s. Unlike the bits of a classical computer, the qubits used by quantum computers store information in two-state systems, such as electrons or photons, that can exist in a combination of both states at once (a phenomenon known as superposition).

“In classical computing, you write in bits of zero and one,” said Thomas Papenbrock, a theoretical nuclear physicist at the University of Tennessee and ORNL who co-led the project with ORNL quantum information specialist Pavel Lougovski. “But with a qubit, you can have zero, one, and any possible combination of zero and one, so you gain a vast set of possibilities to store data.”
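
As a rough illustration of the "combination of zero and one" Papenbrock describes, a single qubit can be written as cos(θ/2)|0⟩ + sin(θ/2)|1⟩, where the squared amplitudes give the odds of reading 0 or 1. The sketch below is only a toy model with a hypothetical helper name (`measurement_probabilities`); it ignores relative phase and is not taken from the team's code.

```python
import math

def measurement_probabilities(theta):
    """Return (P(0), P(1)) for the state cos(theta/2)|0> + sin(theta/2)|1>.

    Hypothetical helper for illustration; relative phase is ignored.
    """
    amp0 = math.cos(theta / 2)
    amp1 = math.sin(theta / 2)
    return amp0 ** 2, amp1 ** 2

# theta = 0 is a classical "0", theta = pi is a classical "1",
# and anything in between is a superposition of the two.
p0, p1 = measurement_probabilities(math.pi / 2)  # equal superposition
print(f"P(0) = {p0:.3f}, P(1) = {p1:.3f}")
```

Any value of `theta` between 0 and π yields a valid state, which is the continuum of possibilities the quote refers to.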

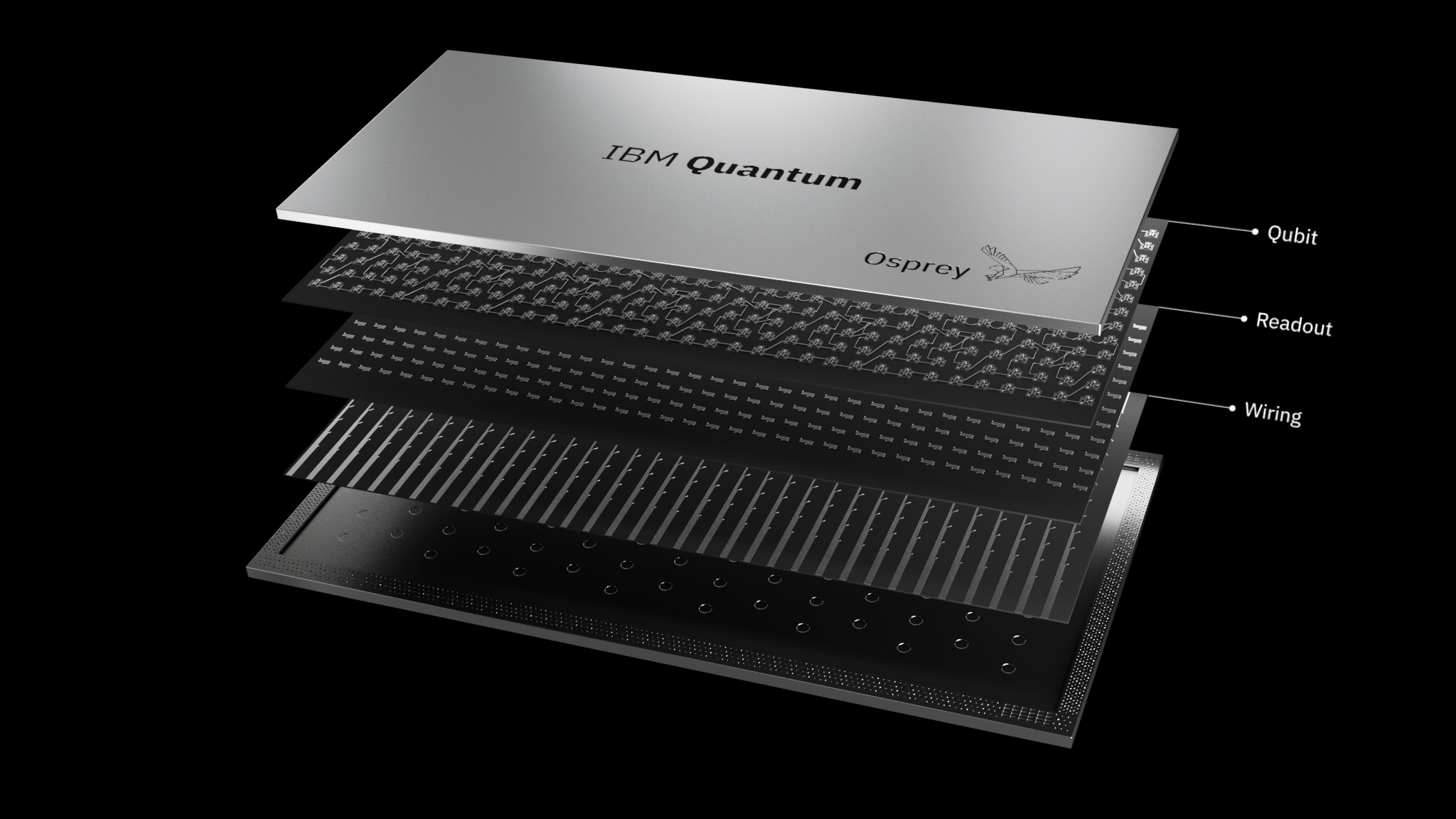

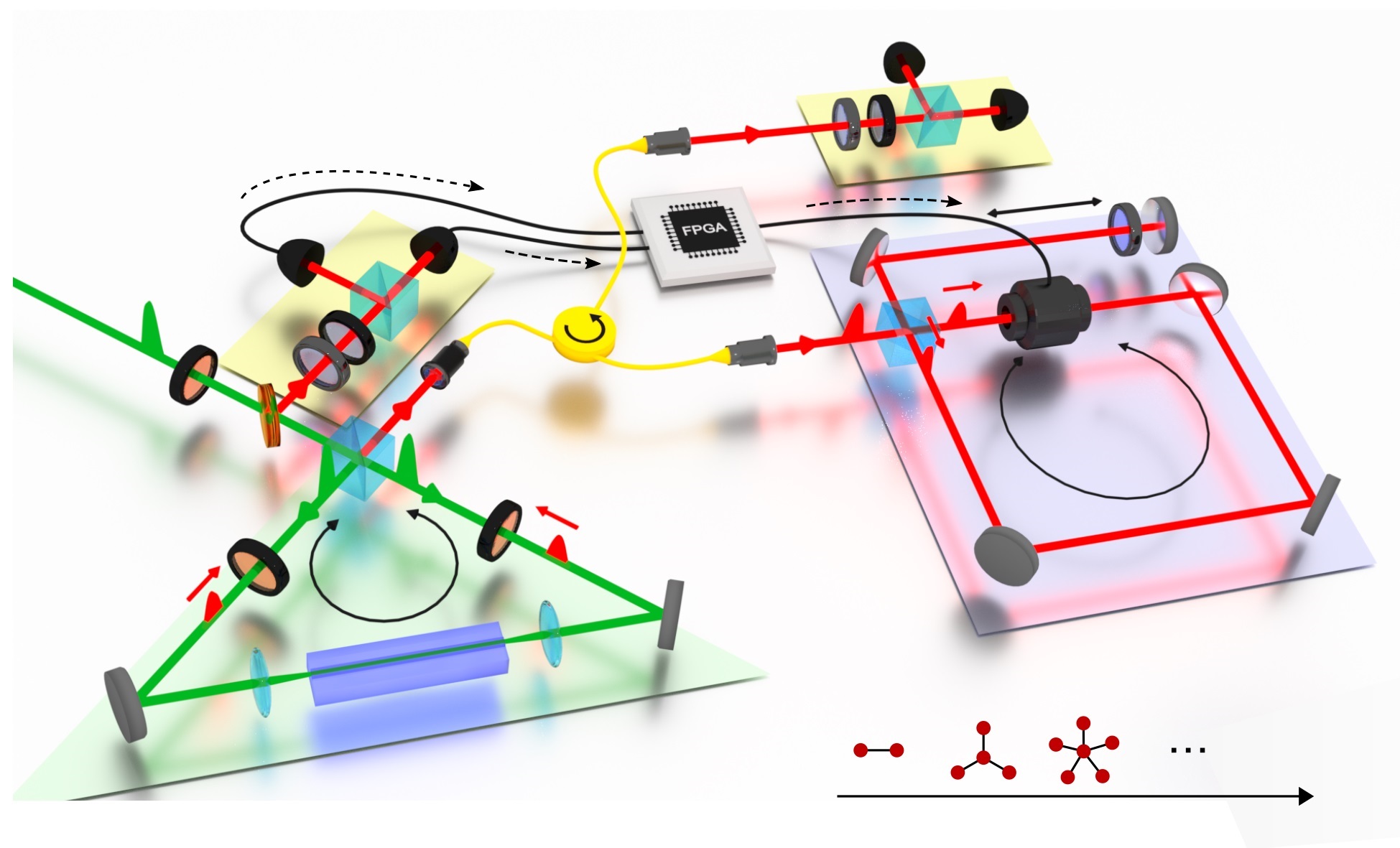

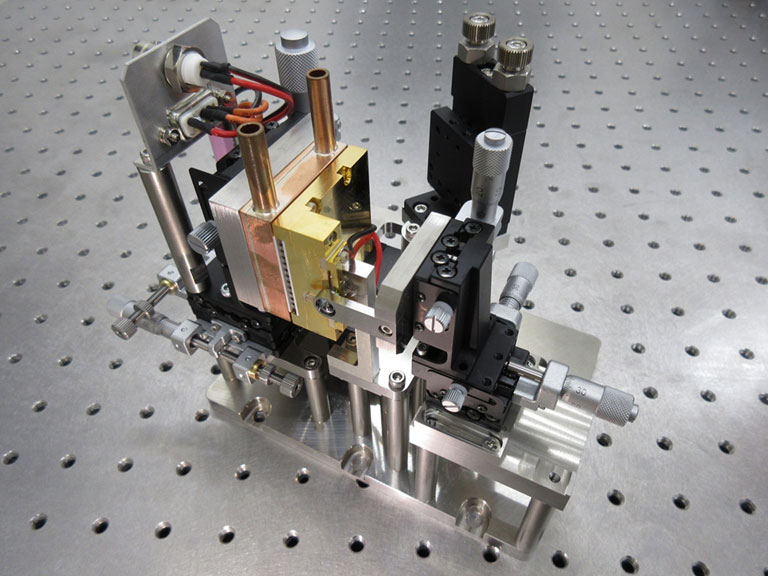

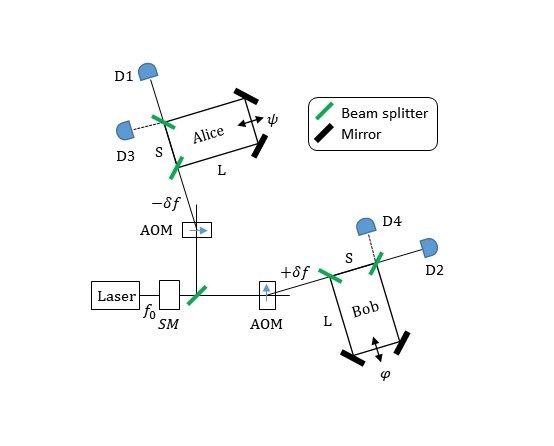

In October 2017 the multidivisional ORNL team started developing codes to perform simulations on the IBM QX5 and the Rigetti 19Q quantum computers through DOE’s Quantum Testbed Pathfinder project, an effort to verify and validate scientific applications on different quantum hardware types. Using freely available pyQuil software, a library designed for producing programs in the quantum instruction language, the researchers wrote a code that was sent first to a simulator and then to the cloud-based IBM QX5 and Rigetti 19Q systems.

The team performed more than 700,000 quantum computing measurements of the energy of a deuteron, the nuclear bound state of a proton and a neutron. From these measurements, the team extracted the deuteron’s binding energy—the minimum amount of energy needed to disassemble it into these subatomic particles. The deuteron is the simplest composite atomic nucleus, making it an ideal candidate for the project.

“Qubits are generic versions of quantum two-state systems. They have no properties of a neutron or a proton to start with,” Lougovski said. “We can map these properties to qubits and then use them to simulate specific phenomena—in this case, binding energy.”
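
To make the mapping Lougovski describes more concrete, the sketch below builds a two-state deuteron Hamiltonian, rewrites it in terms of qubit (Pauli) operators, and diagonalizes it classically. The model parameters (`HBAR_OMEGA`, `V0`) and the matrix-element formulas are recalled from the published paper rather than stated in this article, so they should be treated as assumptions and checked against the paper; the function names are hypothetical.

```python
import math

# Assumed model parameters (recalled from the published paper, not from this article).
HBAR_OMEGA = 7.0       # oscillator energy spacing, MeV
V0 = -5.68658111       # strength of the short-range attraction, MeV

def kinetic(n, m):
    """Kinetic-energy matrix element <n|T|m> in the oscillator basis (tridiagonal)."""
    if n == m:
        return 0.5 * HBAR_OMEGA * (2 * n + 1.5)
    if abs(n - m) == 1:
        k = min(n, m)
        return -0.5 * HBAR_OMEGA * math.sqrt((k + 1) * (k + 1.5))
    return 0.0

def hamiltonian_2x2():
    """Two-state Hamiltonian; the attraction acts only on the lowest state."""
    h = [[kinetic(n, m) for m in range(2)] for n in range(2)]
    h[0][0] += V0
    return h

def pauli_coefficients(h):
    """Map occupation a†_n a_n -> (1 - Z_n)/2 and hopping -> (X0X1 + Y0Y1)/2."""
    return {
        "I": 0.5 * (h[0][0] + h[1][1]),
        "Z0": -0.5 * h[0][0],
        "Z1": -0.5 * h[1][1],
        "X0X1+Y0Y1": 0.5 * h[0][1],
    }

def ground_energy(h):
    """Lowest eigenvalue of a real symmetric 2x2 matrix, in closed form."""
    mean = 0.5 * (h[0][0] + h[1][1])
    half_gap = 0.5 * (h[0][0] - h[1][1])
    return mean - math.hypot(half_gap, h[0][1])

h = hamiltonian_2x2()
coeffs = pauli_coefficients(h)
e0 = ground_energy(h)
print(coeffs)
print(f"two-state ground energy: {e0:.3f} MeV")
```

Under these assumptions the two-state model is bound by roughly 1.75 MeV. That is the classical reference for the truncated model, not the physical binding energy, and it is the kind of number a two-qubit run would be compared against.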

A challenge of working with these quantum systems is that scientists must run simulations remotely and then wait for results. ORNL computer science researcher Alex McCaskey and ORNL quantum information research scientist Eugene Dumitrescu ran single measurements 8,000 times each to ensure the statistical accuracy of their results.
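
The reason for repeating each measurement thousands of times can be sketched with a simple simulation: every shot returns +1 or -1, and the uncertainty of the average shrinks roughly as one over the square root of the number of shots. The probability used below (`p_one=0.3`) is a made-up value for illustration, and the helper name is hypothetical.

```python
import math
import random

def estimate_expectation(p_one, shots, rng):
    """Estimate <Z> from repeated single-qubit measurements.

    Each shot yields +1 (outcome 0) or -1 (outcome 1, with probability p_one).
    Returns the sample mean and its standard error.
    """
    total = 0
    for _ in range(shots):
        total += -1 if rng.random() < p_one else 1
    mean = total / shots
    # For +/-1 outcomes the variance is 1 - mean^2.
    stderr = math.sqrt(max(0.0, 1.0 - mean * mean) / shots)
    return mean, stderr

rng = random.Random(0)  # fixed seed so the sketch is reproducible
mean, stderr = estimate_expectation(p_one=0.3, shots=8000, rng=rng)
print(f"<Z> is about {mean:.3f} +/- {stderr:.3f}")
```

With 8,000 shots the standard error in this toy case is about 0.01, compared with about 0.1 for 100 shots.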

“It’s really difficult to do this over the internet,” McCaskey said. “This algorithm has been done primarily by the hardware vendors themselves, and they can actually touch the machine. They are turning the knobs.”

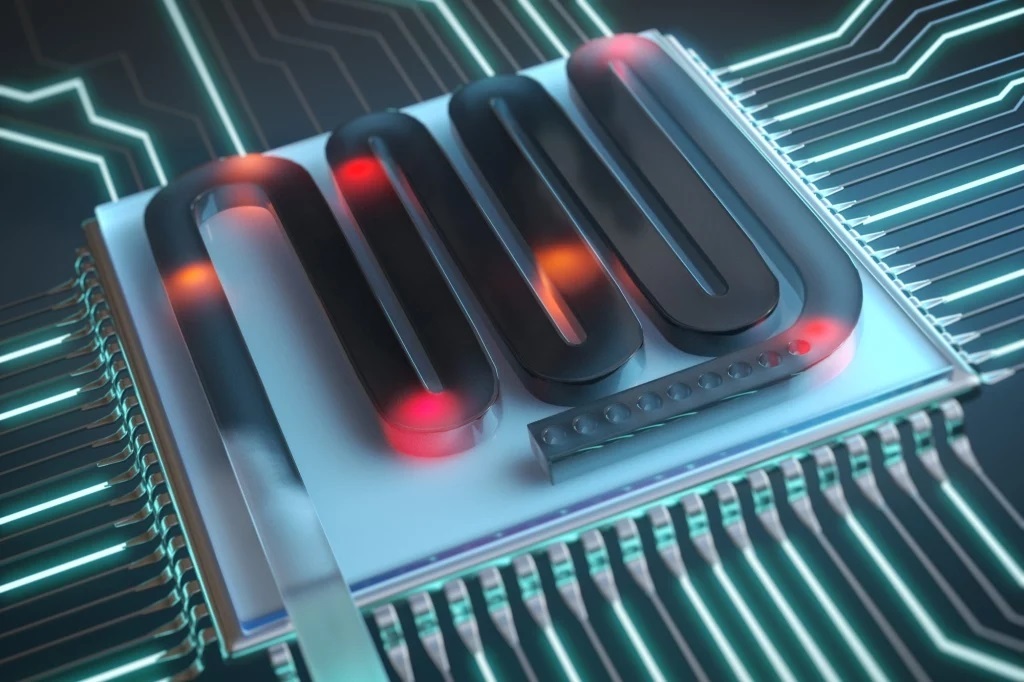

The team also found that quantum devices become tricky to work with due to inherent noise on the chip, which can alter results drastically. McCaskey and Dumitrescu successfully employed strategies to mitigate high error rates, such as artificially adding more noise to the simulation to see its impact and deduce what the results would be with zero noise.
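
The idea of adding noise on purpose and then working backward to zero noise can be sketched as a straight-line fit: measure the energy at several amplified noise levels and read off the intercept at zero. The data points below are hypothetical, and a linear fit is only one possible choice, assumed here for simplicity; the article does not say which fit the team used.

```python
def extrapolate_to_zero_noise(noise_scales, energies):
    """Fit a least-squares line through (scale, energy) points.

    Returns the fitted energy at noise scale 0 (the intercept).
    """
    n = len(noise_scales)
    mean_x = sum(noise_scales) / n
    mean_y = sum(energies) / n
    sxx = sum((x - mean_x) ** 2 for x in noise_scales)
    sxy = sum((x - mean_x) * (y - mean_y)
              for x, y in zip(noise_scales, energies))
    slope = sxy / sxx
    return mean_y - slope * mean_x

# Hypothetical measurements: scale 1.0 is the hardware's native noise,
# larger scales have extra noise added on purpose.
scales = [1.0, 3.0, 5.0]
energies = [-1.60, -1.30, -1.00]  # MeV, made-up values
e_zero = extrapolate_to_zero_noise(scales, energies)
print(f"extrapolated zero-noise energy: {e_zero:.2f} MeV")
```

In this made-up data set the energy rises by 0.15 MeV per unit of noise, so the fit extrapolates to -1.75 MeV at zero noise.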

“These systems are really susceptible to noise,” said Gustav Jansen, a computational scientist in the Scientific Computing Group at the Oak Ridge Leadership Computing Facility (OLCF), a DOE Office of Science User Facility located at ORNL. “If particles are coming in and hitting the quantum computer, it can really skew your measurements. These systems aren’t perfect, but in working with them, we can gain a better understanding of the intrinsic errors.”

At the completion of the project, the team’s results on two and three qubits were within 2 and 3 percent, respectively, of the correct answer on a classical computer, and the quantum computation became the first of its kind in the nuclear physics community.

The proof-of-principle simulation paves the way for computing much heavier nuclei, with many more protons and neutrons, on quantum systems in the future. Quantum computers have potential applications in cryptography, artificial intelligence, and weather forecasting because each additional qubit can become entangled (tied inextricably) with the others, exponentially increasing the number of possible outcomes for the measured state at the end. This very benefit, however, has a downside: errors may also scale exponentially with problem size.

Papenbrock said the team’s hope is that improved hardware will eventually enable scientists to solve problems that cannot be solved on traditional high-performance computing resources—not even on the ones at the OLCF. In the future, quantum computations of complex nuclei could unravel important details about the properties of matter, the formation of heavy elements, and the origins of the universe.

Results from the study, titled “Cloud Quantum Computing of an Atomic Nucleus,” were published in Physical Review Letters.

The paper’s coauthors, all from ORNL, were Eugene F. Dumitrescu, Alex J. McCaskey, Gaute Hagen, Gustav R. Jansen, Titus D. Morris, Thomas Papenbrock, Raphael C. Pooser, David J. Dean, and Pavel Lougovski. Hagen, Morris, Papenbrock, and Pooser also are affiliated with the University of Tennessee, Knoxville.

The team’s research was supported by DOE’s Office of Science. ORNL is managed by UT-Battelle for DOE’s Office of Science. The Office of Science is the single largest supporter of basic research in the physical sciences in the United States and is working to address some of the most pressing challenges of our time. For more information, please visit https://science.energy.gov/.